TL;DR: ElevenLabs is the clear leader in AI voice generation in 2026, with a free tier offering around 10,000 characters per month and, on paid plans, voice cloning that needs as little as one minute of audio. It's not flawless: pacing and emphasis still slip. But nothing else comes as close to a natural-sounding voice.

How we tested this: Every tool covered in this article was evaluated hands-on by the TalentedAtAI team. We signed up for real accounts, tested core features against actual use cases, and assessed output quality, pricing accuracy, and workflow fit. Our verdicts are independent. Affiliate relationships, where they exist, are disclosed and never influence our ratings.

This article contains affiliate links. We may earn a small commission at no extra cost to you.

There is a moment, the first time you hear a good AI voice reading something you wrote, where your brain does a small double-take. It's not quite surprise (you knew roughly what to expect) but it's something. The voice is clear, paced, alive in a way you didn't fully anticipate. Then you notice it. A phrase lands a little flat. A word gets slightly the wrong emphasis. The moment breaks, and the voice becomes unmistakably artificial again.

That oscillation, between impressively good and slightly off, is probably the most honest way to describe where ElevenLabs sits in 2026. It is the best AI voice tool available, and it is still an AI voice tool. Understanding both of those things is useful before you decide whether it belongs in your workflow.

What ElevenLabs Actually Does

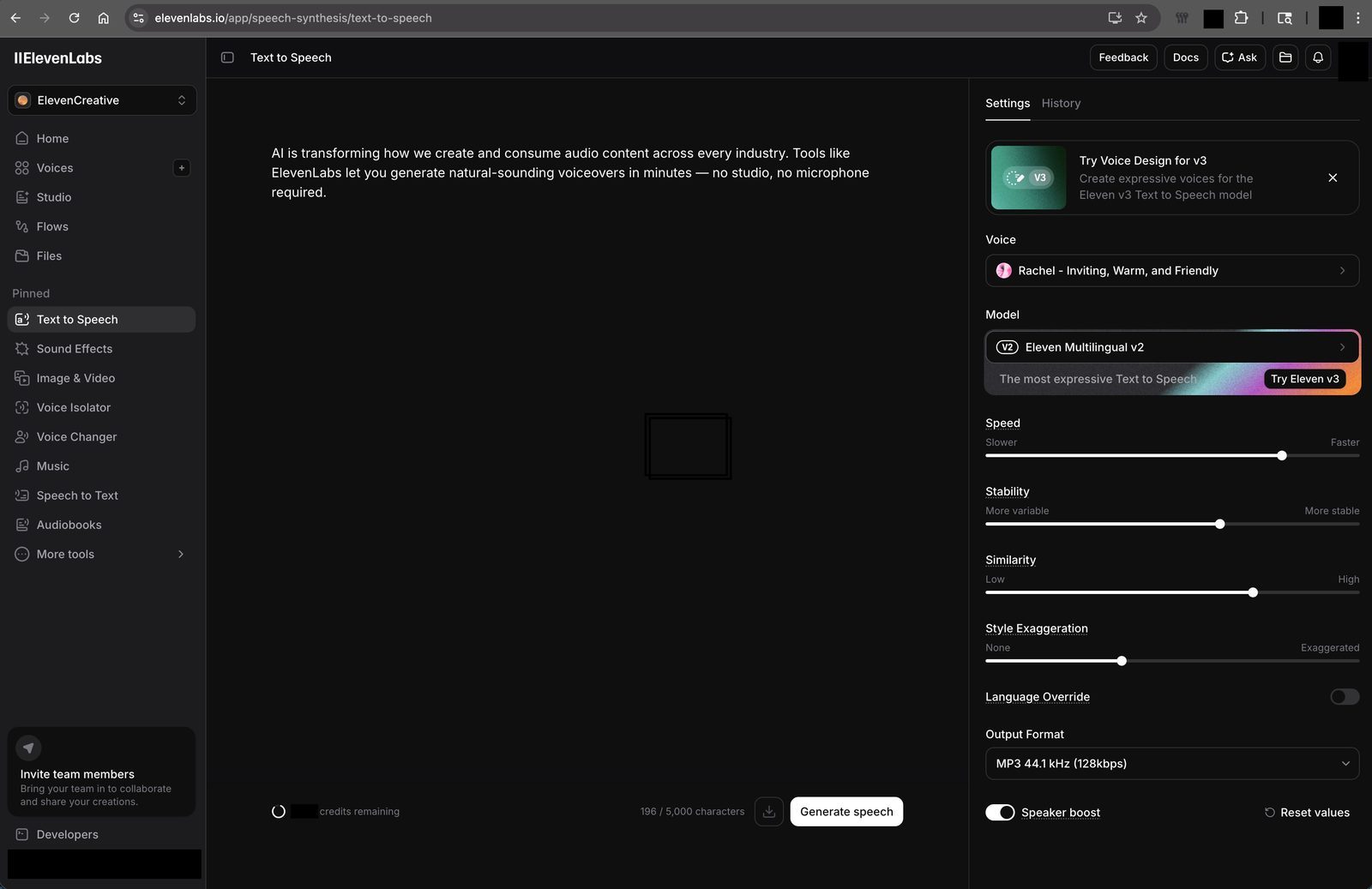

ElevenLabs is a text-to-speech platform, but that description undersells it a little. You paste or upload text, choose a voice from a large library, and the platform generates an audio file that sounds like a person reading it. The quality, in most cases, is significantly better than the robotic text-to-speech you've encountered anywhere else.

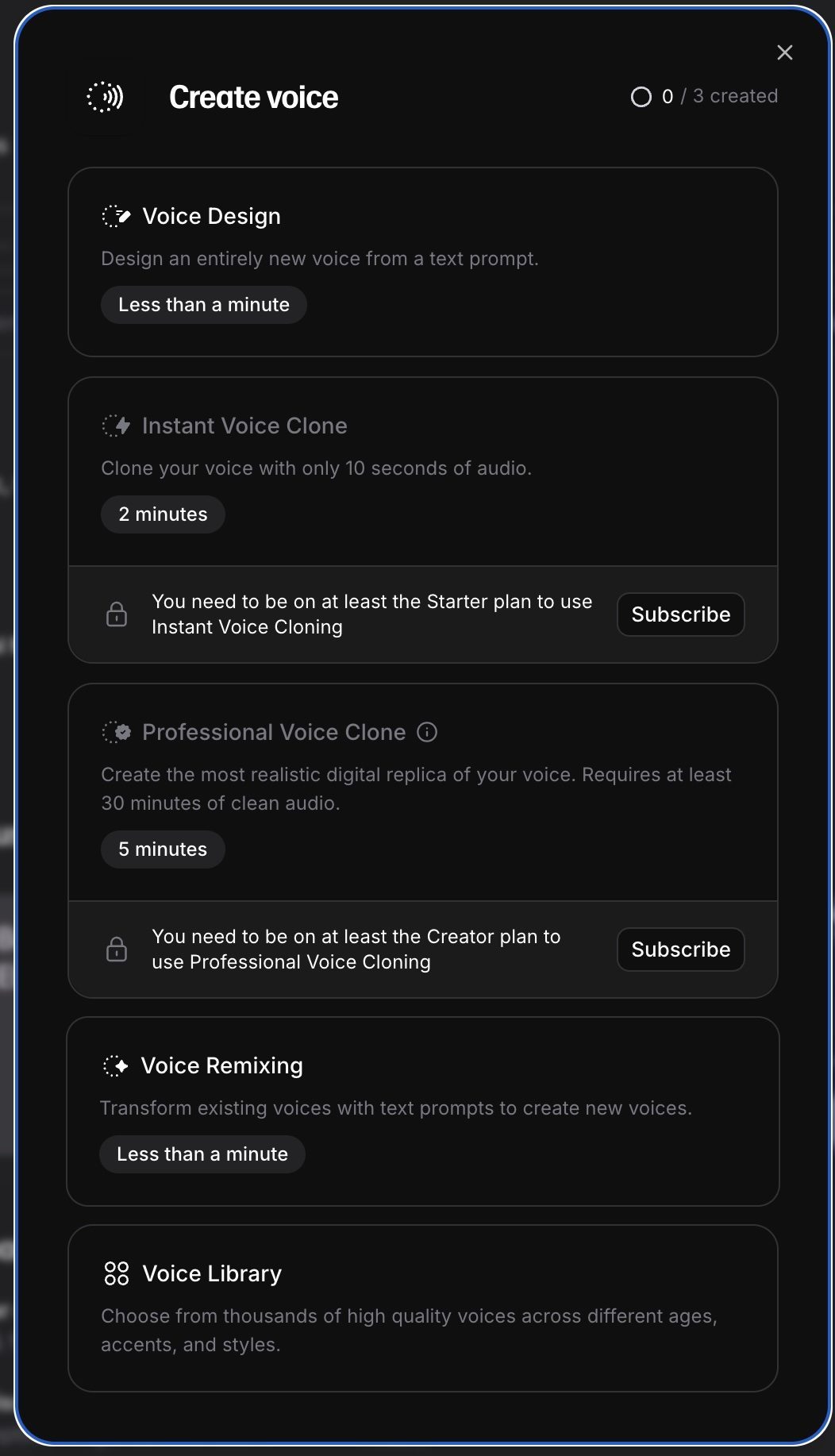

Beyond the pre-built voice library, it also offers voice cloning. You upload a sample of a real voice (your own, for example) and the platform generates a model of it that you can use for future audio. The quality of the clone depends heavily on the length and consistency of the sample you provide, but with a few minutes of clean audio, the results can be startling.

There's also a Dubbing feature, designed for translating videos into other languages while preserving the original speaker's voice characteristics. This has been adopted rapidly by educators, YouTubers with international audiences, and businesses that operate across language markets.

For the creative and business use cases that most people bring to ElevenLabs (turning long articles into audio, creating podcast-style intros, voicing video scripts), it is useful and the output is good enough to share publicly.

Testing It: Voices, Cloning, and the Real Limits

We ran ElevenLabs through a set of practical tests over several weeks.

Pre-built voices: The library includes hundreds of voices with different accents, tones, and styles, and the quality varies more than you'd expect. The best ones are remarkably natural. The worst have a smoothness that feels subtly wrong, like a person speaking in a recording booth who isn't quite sure what the sentence means. Spend time finding voices that fit your content; the difference between a mediocre pick and a good one is significant.

Emotional nuance is where the pre-built voices struggle most. They handle neutral, informational content well. They're less convincing on humour, warmth, or anything that requires a voice to carry emotional weight. You can adjust the settings (stability, clarity, style exaggeration) and with experimentation you can get closer to what you want, but it takes time.

Voice cloning: The results here are the most impressive part of the product. With a clean 3-minute sample, we produced a cloned voice that came close to passing informal listening tests with people who knew the original speaker. They noticed something was off but couldn't say exactly what. With a longer sample, the gap narrowed further.

The obvious application for most people is consistency: recording your voice once and then using it for every piece of content you produce, without needing to set up a microphone every time. For solo content creators, this is practical in a way it would have seemed impossible a few years ago.

Dubbing: This worked better than expected on simple content, worse than expected on anything fast-paced or with complex audio. Background music bleeds through in inconsistent ways, and lip sync in dubbed video is imperfect. Good enough for some use cases; not yet ready to replace a professional dubbing process.

Who Should Actually Use ElevenLabs

This is where most reviews go vague, so let's be specific.

Podcasters and audio creators get the clearest benefit. If you produce long-form audio content and want a consistent, professional-sounding voice for intros, ads, or templated segments without recording them every time, ElevenLabs solves that problem well.

Bloggers and newsletter writers looking to add an audio version of their content will find the tool easy to use. Paste your article, pick a voice, export. The result won't match a professionally recorded human narration, but it will be good enough for a large share of listeners, and it takes five minutes rather than an hour.

Small businesses with a need for consistent voiceover (explainer videos, customer-facing content, training materials) can realistically replace expensive studio sessions for routine content. The savings are real. The quality trade-off is real too, and worth being honest about with anyone whose expectations you're managing.

Language learners are a growing use case that doesn't get enough attention. Hearing authentic-sounding speech in your target language, including regional accents, is valuable for ear training, and ElevenLabs makes this accessible.

Where it's less useful: anything that requires real emotional performance, character acting, or content where the intimacy of a real human voice is central to the experience. Audiobooks for literary fiction, for example. Counselling or education content where warmth is essential. The AI will technically read the words; the felt experience will be missing.

Pricing and What You Actually Get

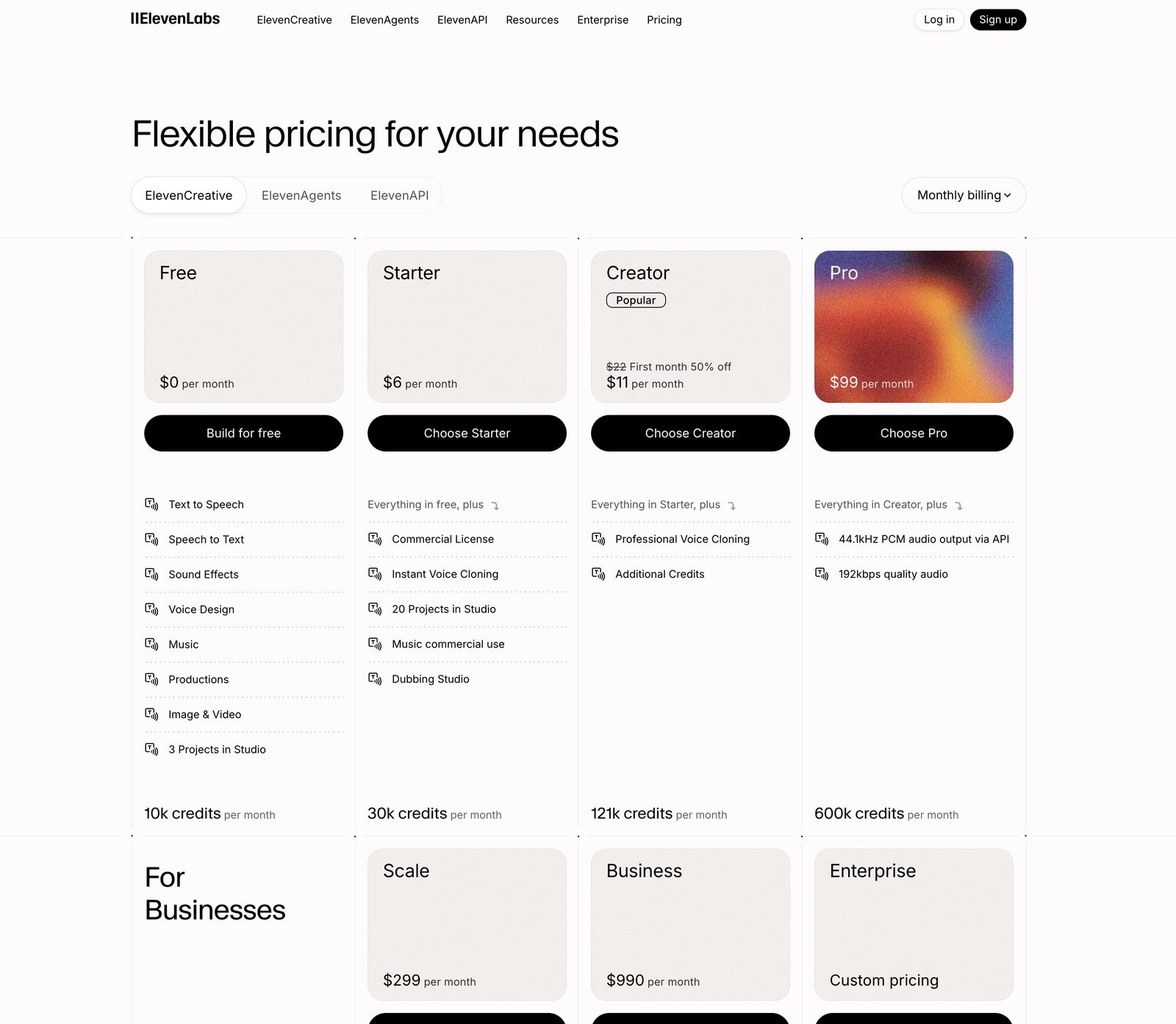

ElevenLabs offers a free tier that gives you around 10,000 characters per month, enough to evaluate the tool properly but not enough for consistent production use. The Starter plan ($5/month) unlocks 30,000 characters and voice cloning, which covers light regular use. The Creator plan ($22/month) brings you 100,000 characters, which is sufficient for most individual creators.

One important note: the character limit refers to input text, not audio duration. A 1,500-word article is roughly 8,000 characters. At the Starter level, 30,000 characters works out to about 3.75 such articles, so three full articles per month in audio form with room for part of a fourth. Plan accordingly.
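To make that budgeting concrete, here is a minimal sketch of the arithmetic. The plan allowances are the figures quoted in this review, not values pulled from a live price list, and the function names are our own. The characters-per-word ratio comes from the 1,500 words ≈ 8,000 characters rule of thumb above, so treat the results as estimates.

```python
# Monthly character allowances as quoted in this review (check current pricing).
PLAN_CHARACTERS = {"Free": 10_000, "Starter": 30_000, "Creator": 100_000}

# Rule of thumb from the article: 1,500 words is roughly 8,000 characters.
REFERENCE_WORDS = 1_500
REFERENCE_CHARS = 8_000


def estimate_characters(word_count: int) -> int:
    """Estimate input characters for a given word count, rounded up."""
    # Integer ceiling division avoids floating-point rounding surprises.
    return (word_count * REFERENCE_CHARS + REFERENCE_WORDS - 1) // REFERENCE_WORDS


def articles_per_month(plan: str, words_per_article: int = 1_500) -> int:
    """Number of whole articles that fit in a plan's monthly allowance."""
    return PLAN_CHARACTERS[plan] // estimate_characters(words_per_article)


if __name__ == "__main__":
    for plan in PLAN_CHARACTERS:
        print(f"{plan}: {articles_per_month(plan)} full articles of 1,500 words")
```

Swap in your own typical word count to see which tier fits. Regenerating a section after an edit uses characters too, so leave yourself some headroom.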

The Pro and Scale tiers, aimed at teams and high-volume production, exist and are reasonably priced relative to what they replace. If you're spending meaningful money on professional voiceover currently, the maths of switching usually works in ElevenLabs' favour fairly quickly.

How ElevenLabs Compares to Alternatives

ElevenLabs doesn't exist in a vacuum. Several other tools compete in the AI voice space, and understanding how they compare helps you decide whether ElevenLabs is the right choice for your specific use case.

ElevenLabs vs Murf.ai. Murf is the closest direct competitor, particularly for business and marketing content. Murf's interface is slightly more intuitive for video voiceover work. It integrates a timeline editor that lets you sync voiceover to video clips, which ElevenLabs doesn't natively offer. However, ElevenLabs wins on raw voice quality. In our side-by-side testing, ElevenLabs' best voices sounded noticeably more natural, particularly on longer passages where Murf's voices tend to develop a slightly robotic cadence. If you're primarily doing video voiceover work and want the simplest workflow, Murf is worth considering. For pure audio quality, ElevenLabs leads.

ElevenLabs vs Descript. Descript takes a different approach. It's primarily a podcast and video editing tool that includes AI voice features rather than a dedicated voice platform. Descript's Overdub feature lets you type corrections into a transcript and regenerate just the edited portion in your cloned voice, which is uniquely useful for podcast editing. But Descript's text-to-speech quality for full narration is a step below ElevenLabs'. If your primary use is editing existing recordings and fixing mistakes, Descript's workflow is better. If you're generating audio from text, ElevenLabs produces better results.

ElevenLabs vs Play.ht. Play.ht is a solid mid-tier option with competitive pricing and a good voice library. It's particularly strong for shorter content: social media audio, short video voiceovers, audio ads. For longer narration and voice cloning fidelity, ElevenLabs outperforms it. Play.ht's free tier is more generous than ElevenLabs', making it a reasonable choice if budget is the primary concern.

ElevenLabs vs Amazon Polly / Google Cloud TTS. These cloud-based services are designed for developers integrating speech into applications, not for individual content creators. The quality of their standard voices is below ElevenLabs', though their neural voices have improved. They're significantly cheaper at scale, which matters if you're processing millions of characters per month, but they lack the voice cloning and creative features that make ElevenLabs useful for content production.

Tips for Getting the Best Output

After weeks of testing, these are the practices that consistently produce better results.

Spend time on voice selection. The difference between an okay output and a great one often comes down to the voice you choose. Don't settle for the first one that sounds decent. Listen to at least ten voices reading a paragraph of your actual content. The best voice for a blog post narration is different from the best voice for a video script, and what works for tech content may not work for lifestyle content.

Use the speech settings intentionally. ElevenLabs gives you control over stability (how consistent the voice sounds from one generation to the next) and similarity (how closely it matches the selected voice). Lower stability introduces more variation, which sounds more natural for conversational content but less professional for formal narration. Higher stability produces a more consistent, predictable output, while higher similarity keeps the result closer to the source voice. There's no universally correct setting; it depends on the content and the voice.
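If you find yourself switching between content types, it can help to write your settings down as named presets rather than nudging sliders from memory. The sketch below is purely illustrative: the `VoiceSettings` class, the preset names, and the numbers are our own placeholders, and we are assuming both settings sit on a 0 to 1 scale. Tune the values by ear for each voice.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VoiceSettings:
    """Hypothetical container for the two settings discussed above."""
    stability: float   # higher = more consistent, lower = more varied
    similarity: float  # higher = closer to the selected voice

    def __post_init__(self) -> None:
        # Assumption: both settings are expressed on a 0.0 to 1.0 scale.
        for name in ("stability", "similarity"):
            value = getattr(self, name)
            if not 0.0 <= value <= 1.0:
                raise ValueError(f"{name} must be between 0 and 1, got {value}")


# Placeholder starting points, not recommendations from ElevenLabs.
PRESETS = {
    "conversational": VoiceSettings(stability=0.35, similarity=0.75),
    "formal": VoiceSettings(stability=0.75, similarity=0.75),
}


def settings_for(content_type: str) -> VoiceSettings:
    """Look up a preset, falling back to the formal one for unknown types."""
    return PRESETS.get(content_type, PRESETS["formal"])
```

The only design decision here is that conversational content gets lower stability than formal narration, which mirrors the trade-off described above.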

Format your input text for speech, not for reading. Written text and spoken text have different rhythms. Break long sentences into shorter ones. Add commas where you'd naturally pause when speaking. Replace abbreviations with full words. Remove markdown formatting, links, and parenthetical asides that make sense in text but sound awkward when read aloud. Five minutes of text preparation saves significant cleanup time on the audio output.
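Much of that preparation is mechanical, so it can be scripted. The following is a rough sketch, not a complete solution: the `prepare_for_speech` function and the abbreviation list are our own inventions, the regular expressions only cover common markdown patterns, and you should still read the result before generating audio. Breaking up long sentences and adding pauses remains a manual job.

```python
import re

# Illustrative abbreviation map; extend it for your own content.
ABBREVIATIONS = {"e.g.": "for example", "i.e.": "that is", "vs.": "versus"}


def prepare_for_speech(text: str) -> str:
    """Strip formatting that reads badly aloud and expand a few abbreviations."""
    # Markdown images: drop them entirely.
    text = re.sub(r"!\[[^\]]*\]\([^)]*\)", "", text)
    # Markdown links: keep the visible link text, drop the URL.
    text = re.sub(r"\[([^\]]+)\]\([^)]*\)", r"\1", text)
    # Bare URLs.
    text = re.sub(r"https?://\S+", "", text)
    # Heading markers at the start of a line.
    text = re.sub(r"^#+\s*", "", text, flags=re.MULTILINE)
    # Emphasis and inline-code characters (note: this also removes underscores in words).
    text = re.sub(r"[*_`]", "", text)
    # Parenthetical asides, along with the space before them.
    text = re.sub(r"[ \t]*\([^()]*\)", "", text)
    # Expand abbreviations into words that can be spoken.
    for short, spoken in ABBREVIATIONS.items():
        text = text.replace(short, spoken)
    # Collapse any runs of spaces left behind by the removals.
    text = re.sub(r"[ \t]{2,}", " ", text)
    return text.strip()


if __name__ == "__main__":
    sample = "## Intro\nRead the **docs** (they are long) at [our site](https://example.com), e.g. the FAQ."
    print(prepare_for_speech(sample))
```

Removing every parenthetical is a blunt instrument. If your asides carry meaning, rewrite them as their own sentences instead of deleting them.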

Use the Projects feature for long-form content. Rather than generating an entire article or book chapter as one block, use ElevenLabs' Projects to break content into sections. This gives you more control over pacing and lets you regenerate individual sections without re-generating the whole piece. It also makes it easier to use different voices for different roles if your content includes dialogue or multiple perspectives.
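If you are preparing text outside the Projects interface, the same idea of working in sections can be sketched in a few lines. This is our own illustration of the approach and not how Projects works internally: `split_into_sections` is a hypothetical helper, and the 2,000-character default is an arbitrary choice. It groups whole paragraphs so that no section is cut mid-sentence.

```python
def split_into_sections(text: str, max_chars: int = 2_000) -> list[str]:
    """Group paragraphs into sections of at most max_chars characters.

    A single paragraph longer than max_chars is kept whole rather than cut.
    """
    sections: list[str] = []
    current = ""
    for paragraph in (p.strip() for p in text.split("\n\n")):
        if not paragraph:
            continue  # skip blank paragraphs caused by extra newlines
        candidate = f"{current}\n\n{paragraph}" if current else paragraph
        if current and len(candidate) > max_chars:
            # Adding this paragraph would overflow: close the current section.
            sections.append(current)
            current = paragraph
        else:
            current = candidate
    if current:
        sections.append(current)
    return sections


if __name__ == "__main__":
    article = "First paragraph.\n\nSecond paragraph.\n\nThird paragraph."
    for number, section in enumerate(split_into_sections(article, max_chars=40), start=1):
        print(f"Section {number}: {len(section)} characters")
```

Working this way means a single flat-sounding passage costs you one section's worth of characters to regenerate, not the whole article's.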

The Honest Verdict

ElevenLabs is the best AI voice product available in 2026, and it's worth trying. For specific, high-volume users (consistent content creators, small businesses producing regular video or audio, bloggers adding audio versions of their articles), it delivers real value at a price that makes sense.

It is not yet a replacement for human voice work in contexts where emotional authenticity is the point. The gap between a skilled human narrator and ElevenLabs' best output is narrowing every year, but it has not closed. Know what you're using it for, test the free tier before committing, and don't make promises to clients about quality before you've seen the output yourself.

If you've been curious about whether AI voices have crossed some threshold of quality that makes them practically usable, in most cases, for most people's needs, the answer in 2026 is yes.

The API: For Developers and Automators

Beyond the web interface, ElevenLabs offers an API that opens up more advanced use cases. If you're building an application that needs voice (a customer service bot, an educational platform, an accessibility feature), the API gives you programmatic access to the full voice library and cloning capabilities.

The API pricing is based on character usage, with tiers that align roughly with the consumer plans. For developers testing ideas, the free tier's 10,000 characters per month is enough to prototype. For production applications, the Scale tier provides the volume and SLA guarantees that serious products need.

What makes the API particularly interesting is the ability to generate speech in near-real-time for conversational applications. The latency has improved to the point where AI voice responses feel responsive rather than delayed, which matters for anything interactive: phone bots, voice assistants, or live accessibility features.
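For a sense of what programmatic access looks like, here is a minimal sketch using only Python's standard library. Treat the details as assumptions to verify against the current API reference: the endpoint path, the `xi-api-key` header, the `model_id` value, and the `voice_settings` field names reflect our understanding of ElevenLabs' public REST API and may change. The helper names `build_tts_request` and `synthesize` are our own, and the voice ID and API key are placeholders you would supply.

```python
import json
import urllib.request

# Assumed base URL for the public REST API; confirm in the official docs.
API_BASE = "https://api.elevenlabs.io/v1"


def build_tts_request(text: str, voice_id: str, api_key: str,
                      model_id: str = "eleven_multilingual_v2") -> urllib.request.Request:
    """Build (but do not send) a text-to-speech request."""
    payload = {
        "text": text,
        "model_id": model_id,  # assumed model name
        # Illustrative values only; tune per voice.
        "voice_settings": {"stability": 0.5, "similarity_boost": 0.75},
    }
    return urllib.request.Request(
        url=f"{API_BASE}/text-to-speech/{voice_id}",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "xi-api-key": api_key,
            "Content-Type": "application/json",
            "Accept": "audio/mpeg",
        },
        method="POST",
    )


def synthesize(text: str, voice_id: str, api_key: str, out_path: str = "speech.mp3") -> str:
    """Send the request and write the returned audio bytes to out_path."""
    request = build_tts_request(text, voice_id, api_key)
    with urllib.request.urlopen(request, timeout=60) as response:
        audio = response.read()
    with open(out_path, "wb") as audio_file:
        audio_file.write(audio)
    return out_path
```

Calling `synthesize("Hello there", "YOUR_VOICE_ID", "YOUR_API_KEY")` would, under these assumptions, save an MP3 to disk and count the input text against your monthly characters. Keeping request construction separate from sending makes it easy to inspect what would be sent before spending any quota.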

For non-developers who want to automate their ElevenLabs usage, Zapier integration allows you to connect it to other tools. A workflow that automatically generates an audio version of every blog post you publish, or that voices incoming emails for you to listen to during a commute, is achievable without writing code.